How to Turn Real-World Capabilities Into an Agent Skill

- John Doe

- Agent Skills, Tutorial

- 08 May, 2026

General-purpose AI agents are powerful, but they lack the one thing every team has: procedural knowledge. Your code review checklist, your deployment runbook, your API conventions — none of this is built into any model.

That’s where Agent Skills come in. Introduced by Anthropic in 2025, Skills are organized folders of instructions, scripts, and resources that agents can discover and load dynamically. Think of them as onboarding manuals for your agent.

Building a skill is like putting together an onboarding guide for a new hire. Instead of building fragmented, custom-designed agents for each use case, you specialize a general-purpose agent with composable capabilities by capturing and sharing your procedural knowledge.

In this tutorial, you’ll build a Code Review Skill from scratch — packaging your team’s review standards into a reusable skill that any agent can use.

What Is an Agent Skill?

At its simplest, an Agent Skill is a directory containing a SKILL.md file with YAML frontmatter:

---

name: code-review

description: Review pull requests against team conventions and best practices

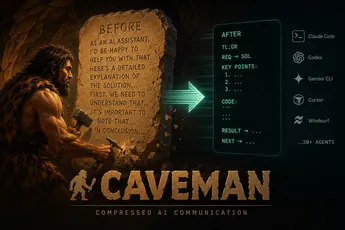

---When the agent starts up, it loads the name and description of every installed skill into its system prompt. This metadata is cheap — always loaded, minimal tokens. The agent uses it to decide whether to load the full skill.

The actual instructions live in the body of SKILL.md, which is only read when the agent determines the skill is relevant. This is the core design principle: progressive disclosure.
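To make the metadata step concrete, here is a minimal sketch of how a loader might read only the frontmatter of a SKILL.md and turn it into a one-line prompt entry. The function names `read_frontmatter` and `metadata_line` are hypothetical, and the parser handles single-line `key: value` pairs only; a real loader would use a full YAML parser.

```python
from pathlib import Path
import tempfile


def read_frontmatter(skill_md: Path) -> dict[str, str]:
    """Return the `key: value` pairs between the two `---` lines.

    Hypothetical helper: single-line values only, no nested YAML.
    """
    lines = skill_md.read_text(encoding="utf-8").splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":  # closing delimiter: stop before the body
            break
        key, sep, value = line.partition(":")
        if sep and key.strip():
            meta[key.strip()] = value.strip()
    return meta


def metadata_line(meta: dict[str, str]) -> str:
    """Format one skill as the short entry an agent might keep in its prompt."""
    return f"- {meta['name']}: {meta['description']}"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        skill_md = Path(tmp) / "SKILL.md"
        skill_md.write_text(
            "---\n"
            "name: code-review\n"
            "description: Review pull requests against team conventions and best practices\n"
            "---\n"
            "## Review Process\n",
            encoding="utf-8",
        )
        print(metadata_line(read_frontmatter(skill_md)))
```

Note that the body after the closing `---` is never parsed here, which is the point: only the name and description are needed up front.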

Progressive Disclosure

Skills use a three-tier information model:

Tier 1: Metadata (name + description). Always loaded into the system prompt. Just enough context for the agent to decide relevance.

Tier 2: SKILL.md body. Loaded when the agent determines the skill is relevant to the current task.

Tier 3: Referenced files. Linked from SKILL.md and loaded on demand, only when needed. Can include markdown reference docs, scripts, templates, or any other resource.

This means the amount of context bundled into a skill is effectively unbounded. The agent pays tokens only for what it actually uses.
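The three tiers can be sketched as a small class that reads each tier lazily and keeps a running character count as a crude stand-in for tokens. The `Skill` class and its method names are assumptions made for illustration, not part of any agent API.

```python
from dataclasses import dataclass
from pathlib import Path
import tempfile


@dataclass
class Skill:
    """Hypothetical view of one skill directory; not a real agent API."""
    root: Path
    name: str
    description: str
    chars_loaded: int = 0  # crude stand-in for tokens placed in context

    def metadata(self) -> str:
        """Tier 1: always present in the system prompt."""
        text = f"{self.name}: {self.description}"
        self.chars_loaded += len(text)
        return text

    def body(self) -> str:
        """Tier 2: SKILL.md without its frontmatter, read only when relevant."""
        text = (self.root / "SKILL.md").read_text(encoding="utf-8")
        parts = text.split("---\n", 2)  # ["", frontmatter, body] when present
        body = parts[2] if len(parts) == 3 and parts[0] == "" else text
        self.chars_loaded += len(body)
        return body

    def reference(self, filename: str) -> str:
        """Tier 3: a file linked from SKILL.md, read only on demand."""
        text = (self.root / filename).read_text(encoding="utf-8")
        self.chars_loaded += len(text)
        return text


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "SKILL.md").write_text(
            "---\nname: code-review\n---\nRead conventions.md first.\n",
            encoding="utf-8",
        )
        (root / "conventions.md").write_text("x" * 10_000, encoding="utf-8")
        skill = Skill(root, "code-review", "Review pull requests")
        skill.metadata()
        skill.body()
        print(skill.chars_loaded)  # the large reference file is not counted yet
        skill.reference("conventions.md")
        print(skill.chars_loaded)  # now it is
```

However large the reference file is, it costs nothing until `reference()` is called, which is why the total size of a skill matters less than the size of what gets loaded for a given task.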

Tutorial: Building a Code Review Skill

Let’s walk through building a skill that guides an agent through reviewing pull requests against your team’s standards.

Step 1: Identify the Gap

Before writing anything, run your agent on a real code review task. Watch where it struggles:

- Does it miss your team’s naming conventions?

- Does it ignore security patterns specific to your stack?

- Does it suggest changes that violate your architecture decisions?

These gaps are your skill’s table of contents.

Step 2: Create the Directory Structure

~/.claude/skills/code-review/

├── SKILL.md

├── conventions.md

├── security-checklist.md

└── review-workflow.md

Step 3: Write the YAML Frontmatter

The name and description are the only signals the agent has for deciding when to load this skill. Be specific — “Review code” is too vague and might trigger during development. “Review pull requests against team conventions” is better.

---
name: code-review
description: >
  Review pull requests using team-specific conventions,
  security patterns, and architecture guidelines.
  Triggers on "review", "PR", "pull request", "code review".
---

Step 4: Write the Core Instructions

In the body of SKILL.md:

## Review Process

1. Read the PR description for context on what changed and why.

2. Read `conventions.md` before checking style; all style checks go through these rules.

3. Check every changed file against `security-checklist.md`.

4. Follow `review-workflow.md` for the structural review sequence.

## Output Format

For each issue found, report:

- **Severity**: critical / warning / suggestion

- **File**: path and line number

- **Rule**: which convention or pattern was violated

- **Fix**: specific, actionable suggestion

Step 5: Add Reference Files (Tier 3)

Split detailed knowledge into separate files. This keeps the core lean and lets the agent load only what it needs.

conventions.md:

## Naming

- React components: PascalCase

- Hooks: camelCase, prefixed with "use"

- Constants: UPPER_SNAKE_CASE

- Private functions: prefixed with underscore

## Imports

- Group: built-in → external → internal → relative

- Sort alphabetically within each group

- No barrel imports from index.ts

security-checklist.md:

Check every changed file for:

- SQL queries using string interpolation (use parameterized queries)

- User input rendered without escaping

- Hardcoded secrets or tokens

- Missing authentication on new API routes

- Insecure direct object references (IDOR)

review-workflow.md:

## Sequence

1. Convention check (conventions.md)

2. Security scan (security-checklist.md)

3. Architecture review — does the change fit the module structure?

4. Test coverage — are there tests for the new logic?

5. Documentation — are public APIs documented?

## Rules of thumb

- A PR should do one thing. Flag PRs mixing refactoring with feature work.

- Prefer small, focused commits over large monolithic ones.

- If a fix is needed, request it once and verify in the next round.

Now the agent can read conventions.md for every review, but open security-checklist.md only if the diff touches security-relevant code, and review-workflow.md only for larger or structural changes, provided the steps in SKILL.md state those conditions. That’s progressive disclosure in action.

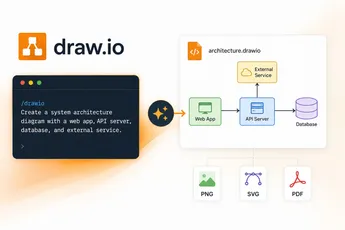
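To make the loading conditions concrete, here is the rule "conventions always, security checklist for security-relevant diffs, workflow for larger changes" written out as a plain function. In practice the model makes this call from the wording in SKILL.md; the keyword list and the size thresholds below are hypothetical and only illustrate the shape of the decision.

```python
# Hypothetical path fragments that suggest security-relevant code.
SECURITY_HINTS = ("auth", "login", "sql", "query", "token", "secret", "routes")


def references_for(changed_files: list[str], lines_changed: int) -> list[str]:
    """Pick which reference files a review of this diff would need."""
    refs = ["conventions.md"]  # style rules apply to every review
    touches_security = any(
        hint in path.lower() for path in changed_files for hint in SECURITY_HINTS
    )
    if touches_security:
        refs.append("security-checklist.md")
    # Assumed cutoffs for a "larger or structural" change.
    if lines_changed >= 200 or len(changed_files) >= 10:
        refs.append("review-workflow.md")
    return refs


if __name__ == "__main__":
    print(references_for(["src/Button.tsx"], 12))
    print(references_for(["src/auth/login.ts"], 12))
    print(references_for(["src/Button.tsx"], 300))
```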

Step 6: Add Scripts for Deterministic Tasks

Some operations are better suited for code than LLM reasoning. Large language models excel at many tasks, but sorting a list via token generation is far more expensive than running a sorting algorithm. Beyond efficiency, many applications need the deterministic reliability that only code can provide.

Include a helper script in your skill directory:

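# diff-stats.py
# Summarize a git-style unified diff read from stdin.

import re
import sys

diff_text = sys.stdin.read()

# Changed files, taken from the "+++ b/<path>" header lines.
files = re.findall(r'^\+\+\+ b/(.+)$', diff_text, re.MULTILINE)

# Count added and removed lines, skipping the "+++" and "---" file headers.
lines_added = len(re.findall(r'^\+(?!\+\+ (?:b/|/dev/null))', diff_text, re.MULTILINE))
lines_removed = len(re.findall(r'^-(?!-- (?:a/|/dev/null))', diff_text, re.MULTILINE))

print(f"Files changed: {len(files)}")
print(f"Lines added: {lines_added}")
print(f"Lines removed: {lines_removed}")
print(f"Scope: {'SMALL' if lines_added < 50 else 'MEDIUM' if lines_added < 200 else 'LARGE'}")

Reference it from SKILL.md: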

Before reviewing, run `python diff-stats.py` (pipe the diff to stdin)

to assess the PR scope. Adjust review depth based on the result:

- SMALL: full review

- MEDIUM: focus on security and architecture

- LARGE: high-level summary only, flag for human review

Step 7: Iterate with Your Agent

This is the most important step. Run your agent on real PRs and watch how it uses the skill. When it makes mistakes, ask it to self-reflect:

“You missed the import ordering convention. Review conventions.md again and explain what went wrong.”

Over time, capture these learnings back into the skill files. The skill is never “done” — it evolves with your team.

Developing and Evaluating Skills

Start with evaluation. Identify specific gaps in your agents’ capabilities by running them on representative tasks and observing where they struggle. Then build skills incrementally to address these shortcomings.

Think from the agent’s perspective. Monitor how your agent uses the skill in real scenarios. Watch for unexpected trajectories or overreliance on certain contexts. If the skill never fires, the name or description probably doesn’t match how the tasks are phrased. If it fires too often, they’re too broad.

Iterate with Claude. As you work, ask Claude to capture its successful approaches and common mistakes into reusable context within the skill. If it goes off track when using a skill, ask it to self-reflect on what went wrong. This produces context the agent actually needs, instead of what you anticipate upfront.

Structure for scale. When SKILL.md becomes unwieldy, split content into separate files. If certain contexts are mutually exclusive or rarely used together, keeping the paths separate reduces token usage. Code can serve both as an executable tool and as documentation.
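The "start with evaluation" advice above can be made concrete with a small scoring helper. This is a sketch under stated assumptions: each test PR has a hand-written set of rules the review should flag, the agent's findings have already been reduced to rule names, and `EvalCase`, `missed_rules`, and `recall` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    """One representative PR: what should be flagged vs. what the agent flagged."""
    label: str  # hypothetical identifier for the test PR
    expected_rules: frozenset[str]
    reported_rules: frozenset[str]


def missed_rules(cases: list[EvalCase]) -> dict[str, int]:
    """Count how often each expected rule went unreported, most-missed first."""
    counts: dict[str, int] = {}
    for case in cases:
        for rule in case.expected_rules - case.reported_rules:
            counts[rule] = counts.get(rule, 0) + 1
    return dict(sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])))


def recall(cases: list[EvalCase]) -> float:
    """Share of expected findings that the agent actually reported."""
    expected = sum(len(c.expected_rules) for c in cases)
    found = sum(len(c.expected_rules & c.reported_rules) for c in cases)
    return found / expected if expected else 1.0


if __name__ == "__main__":
    cases = [
        EvalCase("pr-a", frozenset({"import-order", "naming"}), frozenset({"naming"})),
        EvalCase("pr-b", frozenset({"import-order"}), frozenset()),
    ]
    print(missed_rules(cases))
    print(round(recall(cases), 2))
```

The most frequently missed rules are the first candidates for new or clearer instructions in the skill; rerunning the same cases after each change shows whether the edit helped.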

Cautions

Token management. Every line in a loaded skill consumes context window space. A bloated SKILL.md can crowd out the agent’s reasoning and the user’s conversation. Keep the core lean; push detailed reference material to Tier 3 files.

Naming precision. The name and description are the only signals for triggering. A description like “helps with code” will fire on virtually every request. A description like “review PRs against team style and security conventions” is specific enough to avoid false positives while still matching the right scenarios. The description is the most common source of skill failures.

Cross-skill conflicts. Multiple skills can give contradictory instructions: one says “format with ESLint,” another says “format with Prettier.” Audit your installed skills for compatibility, especially when pulling from community sources.

Security risks. Skills contain instructions and code that agents execute. A malicious skill could exfiltrate data, modify files, or connect to untrusted hosts. Only install skills from trusted sources, and audit every file before first use.

Maintenance debt. Skills are living documents. When your team’s conventions change, the skill must be updated too. An outdated skill is worse than no skill — it confidently enforces wrong rules. Treat skills like code: version them, review changes, and deprecate obsolete ones.
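Two of the cautions above, naming precision and cross-skill conflicts, lend themselves to a rough automated check. The sketch below flags very short descriptions and pairs of skills whose descriptions share most of their keywords, since such pairs are likely to fire together and are worth reading side by side. The stopword list, both thresholds, and the function names are illustrative assumptions, not values from any specification.

```python
import re
from itertools import combinations

# Hypothetical filler-word list; extend it for your own descriptions.
STOPWORDS = {"a", "an", "and", "the", "for", "with", "to", "of", "in", "on", "against"}


def keywords(description: str) -> set[str]:
    """Lowercased words of a description, minus common filler words."""
    return set(re.findall(r"[a-z]+", description.lower())) - STOPWORDS


def audit_descriptions(skills: dict[str, str], min_words: int = 6,
                       max_overlap: float = 0.5) -> list[str]:
    """Return warnings for short descriptions and heavily overlapping pairs.

    `skills` maps skill name to description text.
    """
    warnings = []
    for name, description in skills.items():
        if len(description.split()) < min_words:
            warnings.append(f"{name}: description may be too vague to trigger precisely")
    for (a, desc_a), (b, desc_b) in combinations(skills.items(), 2):
        words_a, words_b = keywords(desc_a), keywords(desc_b)
        if not words_a or not words_b:
            continue
        # Fraction of the smaller keyword set that also appears in the other.
        overlap = len(words_a & words_b) / min(len(words_a), len(words_b))
        if overlap >= max_overlap:
            warnings.append(f"{a} / {b}: descriptions overlap, check for conflicting instructions")
    return warnings


if __name__ == "__main__":
    installed = {
        "code-review": "Review pull requests against team style and security conventions",
        "helper": "helps with code",
        "style-review": "Review pull requests for style conventions",
    }
    for warning in audit_descriptions(installed):
        print(warning)
```

A keyword overlap is only a hint that two skills compete for the same requests; whether their instructions actually contradict each other still needs a human read.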

When Not to Use a Skill

Skills are not the right tool for every job:

One-off tasks. If you’re doing something once, don’t package it. Just tell the agent directly.

Real-time data or external APIs. Skills are static files. If you need to query a database, fetch live metrics, or call an API, use MCP (Model Context Protocol) servers instead. MCP handles dynamic tool execution; Skills handle static knowledge and workflows.

Rapidly changing processes. If your convention changes weekly, you’ll spend more time updating the skill than it saves. Let the process stabilize first, then skill it.

Deterministic execution. Skills guide agent behavior — they don’t enforce it. If you need 100% deterministic behavior, write traditional code or a CI check.

Trivial instructions. If it fits in one sentence, put it in the system prompt. Skills have overhead — only create one when the complexity justifies it.

Human-in-the-loop workflows. Skills assume the agent executes autonomously. If every step requires manual approval, a script or CI pipeline is a better fit.
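For the deterministic-execution case above, a plain check in CI gives a guarantee that a skill cannot. The sketch below reuses the SMALL / MEDIUM / LARGE thresholds from diff-stats.py and returns a non-zero exit code for LARGE diffs so the pipeline can require a human reviewer. It assumes git-style unified diffs; `classify_scope` and `ci_exit_code` are hypothetical names.

```python
import re

# Matches added lines but not the "+++ b/<path>" or "+++ /dev/null" file headers.
ADDED_LINE = re.compile(r"^\+(?!\+\+ (?:b/|/dev/null))", re.MULTILINE)


def classify_scope(diff_text: str) -> str:
    """Classify a git-style unified diff by its number of added lines."""
    added = len(ADDED_LINE.findall(diff_text))
    if added < 50:
        return "SMALL"
    if added < 200:
        return "MEDIUM"
    return "LARGE"


def ci_exit_code(diff_text: str) -> int:
    """Return 1 for LARGE diffs (needs human review), 0 otherwise."""
    return 1 if classify_scope(diff_text) == "LARGE" else 0


if __name__ == "__main__":
    sample = (
        "diff --git a/app.py b/app.py\n"
        "--- a/app.py\n"
        "+++ b/app.py\n"
        "@@ -1,2 +1,3 @@\n"
        " import os\n"
        "-x = 1\n"
        "+x = 2\n"
        "+y = 3\n"
    )
    print(classify_scope(sample), ci_exit_code(sample))
```

In a real pipeline the script would read the diff from stdin and pass the result of `ci_exit_code` to `sys.exit`, so the job fails the same way every time regardless of how the agent interprets the skill.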

Conclusion

Agent Skills are a deliberately simple concept: a folder, a markdown file, and a principle of progressive disclosure. That simplicity is their strength. It makes them composable, shareable, and maintainable.

The best way to get started is to find one specific gap — one thing your agent consistently gets wrong about your team’s work — and write a skill for it. Not ten skills. One.

Open a directory, write a SKILL.md, and give your agent the context it actually needs.